The image moderation tool reduces the time you spend moderating inappropriate content. By automatically rejecting offensive content, it lets you focus on the content you actually want to publish.

What is offensive content?

Adult content will be identified and categorized. Inappropriate images and videos will be blocked from the platform, while uncertain content will be placed on hold.

How does Automatic Image Moderation work?

Once you've activated the automatic image moderation setting, the platform will moderate your content and filter out offensive material. Images and videos the platform is unsure about will be placed 'On Hold' for moderators to review.

Step #1 - ACTIVATION

You have the power to turn this feature ON or OFF for your account (it's OFF by default).

To activate this feature:

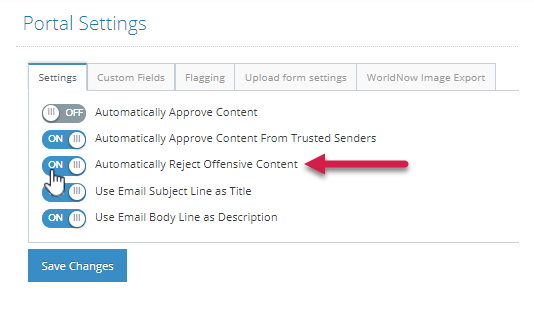

- Navigate to the Portal Settings and turn ON "Automatically Reject Offensive Content".

- Once activated, content will be moderated in real time. Results take 1-2 minutes to appear in the platform.

If you have access to multiple partitions, this feature will need to be activated separately for each partition.

Step #2 - PLATFORM MODERATION

Once you've activated "Automatically Reject Offensive Content", the platform will assign a category to each piece of content:

| Category | What happens next? |
| --- | --- |
| Adult - Very Not Likely | Content sent to the Moderation Gallery for manual curation |
| Adult - Not Likely | Content sent to the Moderation Gallery for manual curation |
| Adult - Possible | Content put ON HOLD in the Moderation Gallery |
| Adult - Likely | Content removed from the platform |
| Adult - Very Likely | Content removed from the platform |
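
If you track moderation results outside the platform (for example, in a report or spreadsheet export), the category rules above can be read as a simple lookup. The sketch below is illustrative only: `Outcome`, `OUTCOME_BY_CATEGORY`, `outcome_for`, and `needs_moderator_review` are hypothetical names chosen for this example and are not part of any platform API. Only the category labels and their outcomes come from this article.

```python
from enum import Enum


class Outcome(Enum):
    """The three outcomes described in this article."""
    MANUAL_CURATION = "sent to the Moderation Gallery for manual curation"
    ON_HOLD = "put ON HOLD in the Moderation Gallery"
    REMOVED = "removed from the platform"


# Hypothetical lookup that mirrors the category rules in this article.
OUTCOME_BY_CATEGORY = {
    "Adult - Very Not Likely": Outcome.MANUAL_CURATION,
    "Adult - Not Likely": Outcome.MANUAL_CURATION,
    "Adult - Possible": Outcome.ON_HOLD,
    "Adult - Likely": Outcome.REMOVED,
    "Adult - Very Likely": Outcome.REMOVED,
}


def outcome_for(category: str) -> Outcome:
    """Return the outcome listed for a category label."""
    try:
        return OUTCOME_BY_CATEGORY[category]
    except KeyError:
        raise ValueError(f"Unknown category: {category!r}") from None


def needs_moderator_review(category: str) -> bool:
    """True only for content that is placed On Hold."""
    return outcome_for(category) is Outcome.ON_HOLD
```

Only "Adult - Possible" content requires a moderator's decision; the other categories are either passed to the gallery for normal curation or removed automatically.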

Step #3 - MODERATING 'ON HOLD' CONTENT

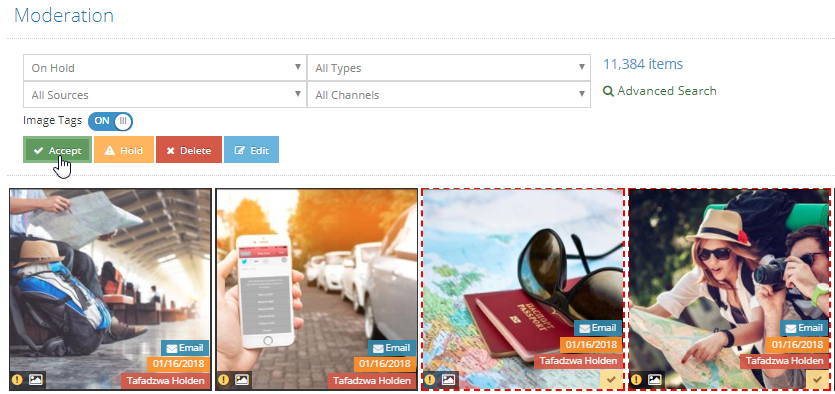

Moderators will need to periodically check the content placed "On Hold" to monitor Adult - Possible content.

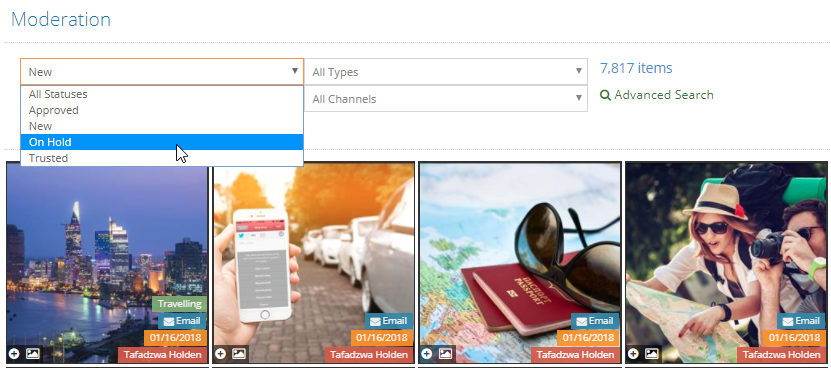

- From the Moderation Gallery, select "On Hold" from the filter drop-down.

- Moderate content that has been classified as "Adult - Possible" by accepting or deleting the image/video.

Tip!

Save time by moderating content in bulk.
